【Background of Service Offering】

As the development of proprietary LLMs (Large Language Models) and the adoption of agentic AI accelerate, the criteria for judging the value of AI infrastructure are changing. Securing computational resources is no longer enough; what matters is how easily those resources can be used and how fully their performance can be drawn out.

GMO Internet responded early to this trend. In 2024, it began offering a managed HPC cluster service with a ready-to-use Slurm environment that requires no setup by the customer, powered by the “NVIDIA H200 GPU,” the latest model at the time. In 2025, to address growing demand for inference, it launched a bare metal service suited to a wide range of use cases. By making HGX B300 available among the earliest in Japan (*1), with its inference enhancements such as FP4 support to accelerate inference processing, GMO Internet has supported the development of the domestic AI industry.

HGX B300 also delivers higher performance for training workloads than the previous generation. To meet continued strong demand for training and customers’ requests to use it within HPC clusters, GMO Internet has now added HGX B300 to the dedicated plan of its managed HPC cluster service.

(*1) GMO GPU Cloud began offering “NVIDIA HGX B300” cloud services among the earliest in Japan (as of December 2025) https://internet.gmo/news/article/122/

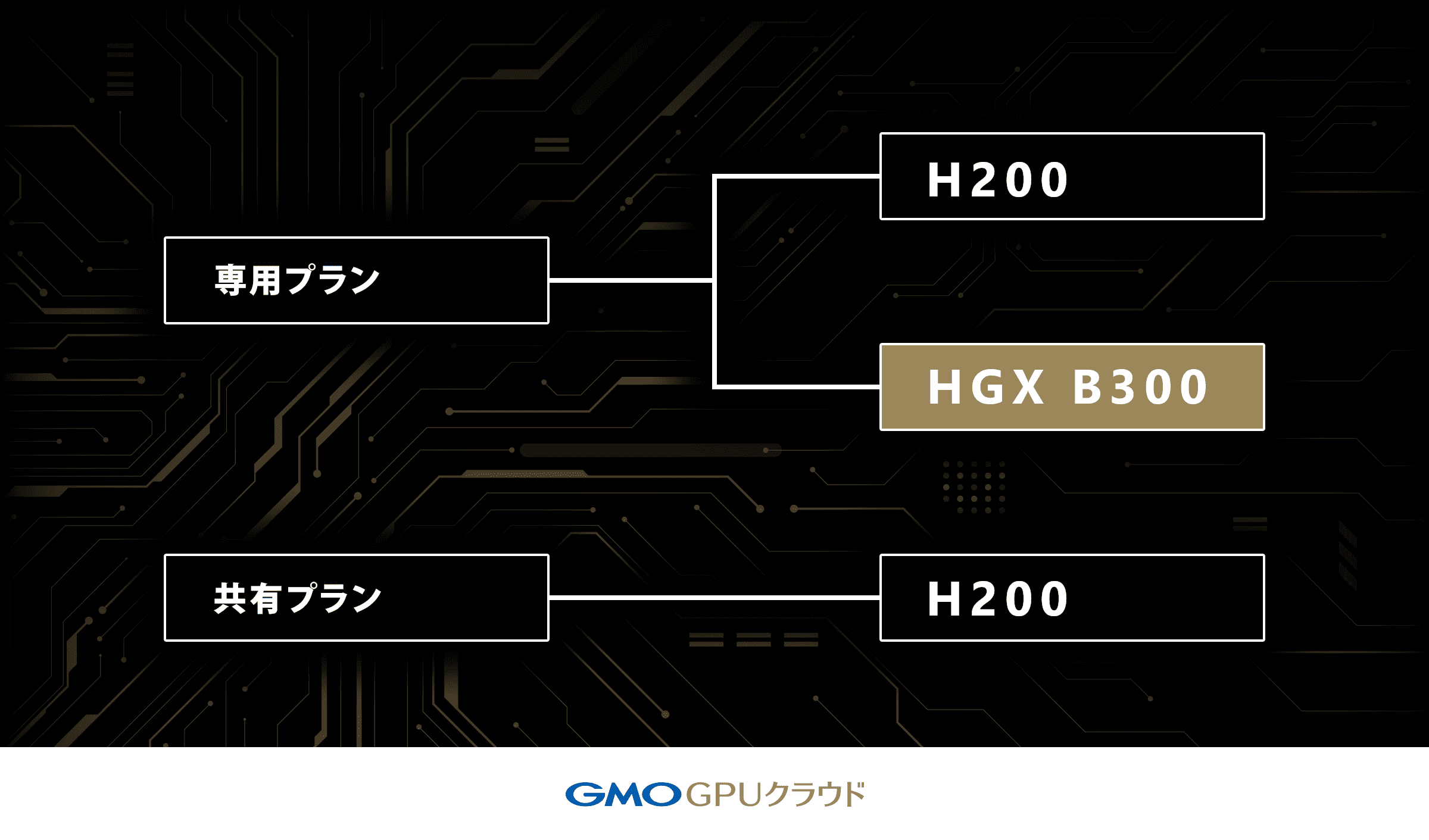

■Addition of HGX B300 to the “Dedicated Plan”

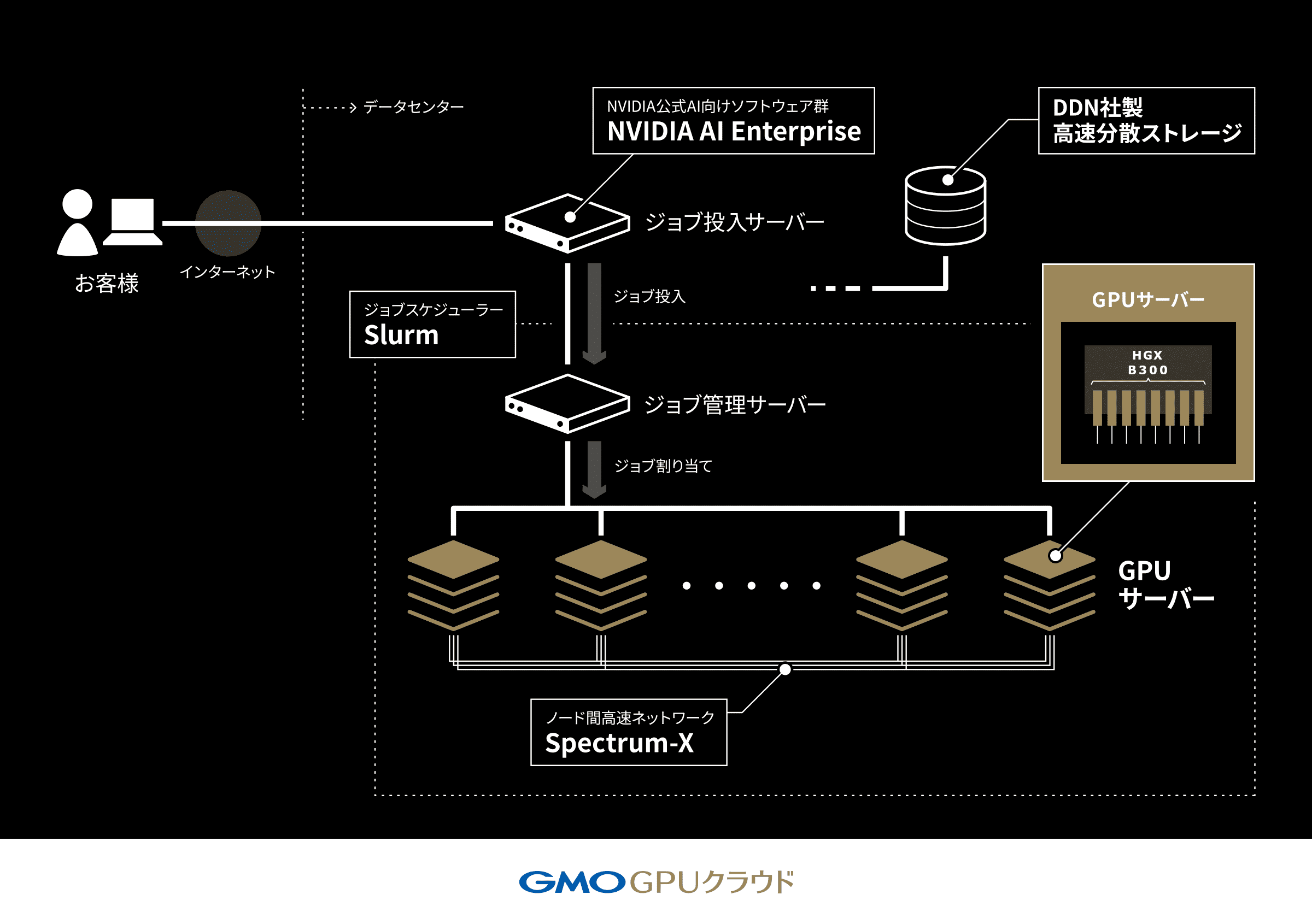

With HGX B300 added to the dedicated plan lineup, customers who want to train and develop on the latest HGX B300 while spending less time on infrastructure setup can now use a fully pre-configured AI development platform. The service adopts an NVIDIA-recommended configuration that incorporates the AI-focused Ethernet networking platform “NVIDIA Spectrum-X” and high-speed distributed storage from DDN (DataDirect Networks, Inc.). As a result, users can draw on the performance of HGX B300 from the moment they start using the service, without complex setup or tuning, and accelerate their workloads.

【About “GMO GPU Cloud”】

(URL:https://gpucloud.gmo/)

1. About the “GMO GPU Cloud” Dedicated Plan

“GMO GPU Cloud” offers a dedicated plan that allows exclusive use of GPU server resources according to usage requirements. Rather than sharing GPU servers among multiple users, contracted resources can be used exclusively, enabling a wide range of high-performance use cases without being affected by congestion.

■Key Features:

・Pre-configured Slurm execution environment (GPU software stack installed and optimized)

・Adoption of NVIDIA-recommended configuration

・Pre-configured interconnect network (local network also available)

・Visualization and operational support via management dashboard

・High-speed distributed storage

With resources centrally managed through Slurm, organizations can run their workloads more efficiently and shorten AI development cycles.
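As a rough illustration of how a job might be prepared for a Slurm-managed environment such as this one, the sketch below renders a batch script as text. It is not taken from the service documentation: the helper name `render_sbatch`, the job name, the time limit, and the `python train.py` command are all hypothetical. The default of 8 GPUs per node simply mirrors the ×8 GPU configuration listed for each server. The `#SBATCH` options used are standard Slurm options.

```python
# Minimal sketch: build the text of a Slurm batch script.
# All names and defaults below are illustrative assumptions, not service settings.

def render_sbatch(job_name: str, nodes: int, command: str,
                  gpus_per_node: int = 8, time_limit: str = "01:00:00") -> str:
    """Return a Slurm batch script that runs `command` on `nodes` nodes."""
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    if gpus_per_node < 1:
        raise ValueError("gpus_per_node must be at least 1")
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --gpus-per-node={gpus_per_node}",
        # One task per GPU is a common layout for distributed training.
        f"#SBATCH --ntasks-per-node={gpus_per_node}",
        f"#SBATCH --time={time_limit}",
        "",
        f"srun {command}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical two-node training job.
    print(render_sbatch("llm-finetune", nodes=2, command="python train.py"))
```

The resulting text could be saved to a file and submitted with `sbatch`; the actual partitions, limits, and recommended options would come from the service's own guidance.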

■Dedicated Plan Configuration Image

2. Overview of the Dedicated Plan Service

| Service Name | GMO GPU Cloud |
|---|---|
| Service Launch Date | November 22, 2024 |
| Use Cases | ・High-speed training and fine-tuning of Large Language Models ・Training computer vision models using large-scale datasets, etc. |
| Pricing | Quoted individually for each company |
| Maximum Number of Users | 100 |
| Local Storage | Free, 30 TiB per unit (for temporary use during job execution) |
| Home Storage Area | Free, 100 GiB per user |
| High-Speed Shared Storage | JPY 30,000/TiB per month (1 TiB–100 TiB) |
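For budgeting purposes, the High-Speed Shared Storage fee can be estimated from the listed rate. The sketch below is only an illustration: it assumes billing in whole TiB with no tax, discount, or minimum-term adjustments, none of which are stated in this release, and the function name is hypothetical.

```python
# Illustrative estimate of the monthly High-Speed Shared Storage fee.
# Whole-TiB billing and the absence of tax or discounts are assumptions.

RATE_JPY_PER_TIB_MONTH = 30_000  # listed rate: JPY 30,000/TiB per month
MIN_TIB, MAX_TIB = 1, 100        # listed capacity range


def shared_storage_monthly_cost(tib: int) -> int:
    """Return the estimated monthly fee in JPY for `tib` TiB."""
    if not MIN_TIB <= tib <= MAX_TIB:
        raise ValueError(f"capacity must be between {MIN_TIB} and {MAX_TIB} TiB")
    return tib * RATE_JPY_PER_TIB_MONTH


if __name__ == "__main__":
    for tib in (1, 10, 100):
        cost = shared_storage_monthly_cost(tib)
        print(f"{tib:>3} TiB: JPY {cost:,} per month")
```

Under these assumptions, 10 TiB comes to JPY 300,000 per month and the 100 TiB maximum to JPY 3,000,000 per month. Local storage and the home storage area are listed as free and are not included.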

3. Available GPU Options

| | NVIDIA HGX B300 | NVIDIA HGX H200 |
|---|---|---|
| GPU Memory | Total 2.3 TB (288 GB HBM3e ×8) | Total 1.1 TB (141 GB HBM3e ×8) |
| CPU Configuration | Intel Xeon 6767P ×2 | Intel Xeon Platinum 8480+ ×2 |
| Main Memory | 3 TB | 2 TB |
| Scratch Space | 14 TB | 14 TB |
| FP4 Tensor Core | 144 PFLOPS / 108 PFLOPS | - |
| FP8 Tensor Core | 72 PFLOPS / 36 PFLOPS | 32 PFLOPS / 16 PFLOPS |
| FP16 Tensor Core | 36 PFLOPS / 18 PFLOPS | 16 PFLOPS / 8 PFLOPS |
| FP32 | 600 TFLOPS | 540 TFLOPS |
| Interconnect | NVIDIA ConnectX-8 SuperNIC ×8, Total 6,400 Gbps | NVIDIA BlueField-3 SuperNIC ×8, Total 3,200 Gbps |
| NVLINK (Inter-GPU Bandwidth per GPU) | 5th Generation (1,800 GB/s) | 4th Generation (900 GB/s) |
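The totals in the comparison table follow from the per-GPU and per-NIC figures. The short sketch below reproduces that arithmetic using only values from the table; the dictionary layout and function names are illustrative, and the per-NIC bandwidth is derived by dividing the listed total evenly across the eight SuperNICs, which is an assumption.

```python
# Reproduce the per-server totals from the comparison table.
# Inputs come from the table; the even per-NIC split is an assumption.

GPUS_PER_SERVER = 8  # both configurations list ×8 GPUs and ×8 SuperNICs

SPECS = {
    "HGX B300": {"hbm_gb_per_gpu": 288, "interconnect_gbps": 6400, "nvlink_gb_s": 1800},
    "HGX H200": {"hbm_gb_per_gpu": 141, "interconnect_gbps": 3200, "nvlink_gb_s": 900},
}


def total_hbm_tb(name: str) -> float:
    """Total GPU memory per server in TB (1 TB = 1000 GB)."""
    return SPECS[name]["hbm_gb_per_gpu"] * GPUS_PER_SERVER / 1000


def per_nic_gbps(name: str) -> float:
    """Interconnect bandwidth per SuperNIC, assuming an even split."""
    return SPECS[name]["interconnect_gbps"] / GPUS_PER_SERVER


def ratio(key: str) -> float:
    """HGX B300 value divided by HGX H200 value for the given spec key."""
    return SPECS["HGX B300"][key] / SPECS["HGX H200"][key]


if __name__ == "__main__":
    for name in SPECS:
        print(f"{name}: {total_hbm_tb(name):.3f} TB HBM3e, "
              f"{per_nic_gbps(name):.0f} Gbps per SuperNIC")
    print(f"GPU memory ratio: {ratio('hbm_gb_per_gpu'):.2f}x")
    print(f"NVLink bandwidth ratio: {ratio('nvlink_gb_s'):.2f}x")
```

The memory totals work out to 2.304 TB and 1.128 TB, which the table rounds to 2.3 TB and 1.1 TB, and both the interconnect total and the NVLink bandwidth per GPU are twice those of HGX H200.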

【Future Outlook】

GMO Internet will contribute to technological innovation in the rapidly evolving fields of AI and robotics by providing cutting-edge AI infrastructure through “GMO GPU Cloud.” Going forward, we will swiftly deliver the latest AI computing platforms and build flexible cloud environments tailored to customer needs. As domestically developed AI infrastructure essential to Japan’s AI industry, we will continue to help drive AI innovation across society and industry.

■Past Reference Releases

・June 11, 2024: GMO Internet Group becomes the first cloud provider in Japan to adopt NVIDIA Spectrum-X for a generative AI GPU cloud service

https://www.gmo.jp/news/article/9005/

・November 22, 2024: Launch of “GMO GPU Cloud,” ranked in the TOP500 supercomputer ranking

https://internet.gmo/en/news/article/27/

・August 4, 2025: “GMO GPU Cloud” adopts NVIDIA Blackwell Ultra GPU

https://internet.gmo/en/news/article/71/

・Other related releases can be found here

https://internet.gmo/en/news/article/163/

【About GMO Internet, Inc.】

GMO Internet, Inc. is a comprehensive internet company that operates Internet Infrastructure businesses such as domains, cloud and rental servers, and internet connectivity, as well as Online Advertising and Media. Under the corporate catchphrase “Internet for Everyone,” we support social infrastructure by contributing to the realization of a safe and secure internet society and creating new value for the future with AI, delivering “Smiles” and “Inspiration” to everyone involved.